I’m going to describe an occlusion culling algorithm I came up with about 4 or 5 years ago. I use it in my game and it has worked well for me.

If you do not know what occlusion culling is, it is culling objects that are in the frustum, but are blocked from the users view, and do not contribute to the scene.

Prior to this I used a Software Rasterizer based implementation, but I found that it was problematic for the following reasons.

- Low resolution: not a huge issue, but it is much lower resolution than the GPU rasterizer so it isn’t completely correct

- False Occlusion: since my occluders had to be auto generated at run time from arbitrary SDFs, I used a convex hull approximation which was not always conservative, this caused some false occlusion

- Memory: each occluder had its own set of triangles that needed to be stored. Also meant jumping around in memory to access each of these sets.

SDF Occlusion Algorithm Overview

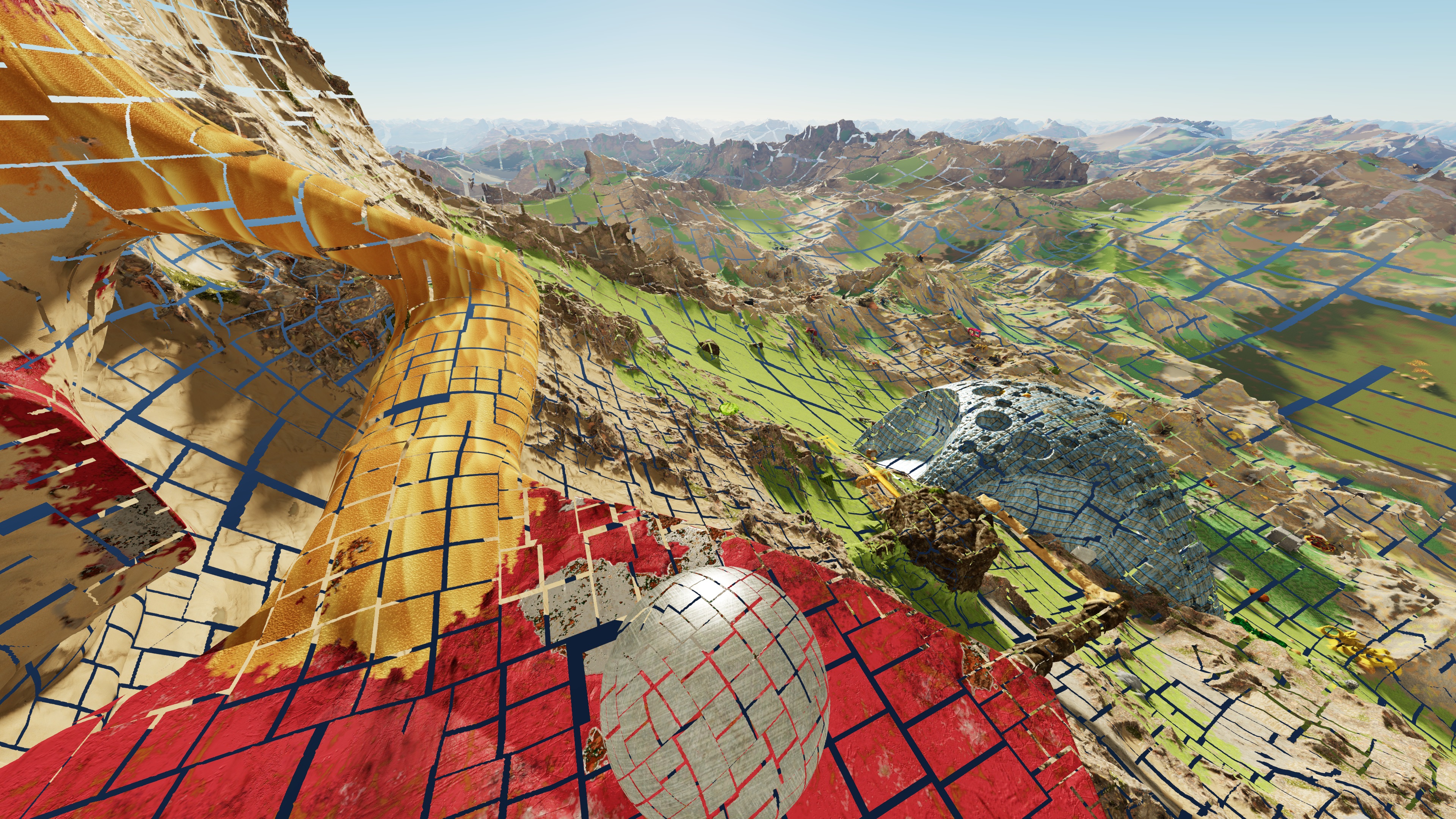

My scene is represented with triangular patches, each containing perhaps 500 to 2000 triangles. They are approximately equal size in screen space.

I’ve scaled the patches down here so that we can see gaps between them

Basically we want to shoot rays from the viewers eye to each patch.

Because our scene is represented with a signed distance field, we always know the distance to the nearest surface.

If there is ever a point along the ray where we are inside of something, and the distance to the surface is sufficient to occlude our patch, we know the patch isn’t visible.

Optimizing to make it practical

The number of patches depends on the settings, but a typical number after frustum culling might be 10,000.

Shooting 10,000 rays through complex SDFs is quite slow, thankfully it is turns out that each frame is generally very similar to the previous, so we can take advantage of temporal stability.

Typically I only end up tracing around 100 rays per frame.

This is done by tracking previous occlusion results, and storing a sphere that represents the most occluded point.

The next time around, for patches that were occluded, we can quickly retest them without needing the SDF, by using a sphere occludes sphere test.

If that fails, we can still optimize by starting the ray test near the point of previous occlusion.

For patches that were previously not occluded, we can actually perform a fairly similar test, if there hasn’t been enough relative movement, there is no need to test the SDF, and we can assume the object is visible.

I differentiate between dynamic and static parts of the scene, as these temporal tests only work reliable for the static part.

I also use a timer to set a max time that this tracing process can run per frame.

Other Benefits

I track an occlusion ratio for each patch, this is between 0 and 1.

So even if something isn’t completely occluded I can still tell when it is partially occluded.

I use this occlusion ratio to adjust the priority for patch splitting and generation.

- Patches that are completely occluded are never split.

- Patches that are partially occluded, have a much lower score/priority, and won’t split until the user gets closer.

Future Work

Dynamic objects are occluded by static objects, but I haven’t yet added the code for dynamic objects to occlude anything. It isn’t terribly difficult, but I don’t think it will contribute much so I haven’t prioritized that work.

Potential downsides

- While this algorithm works well for me, this is largely due to the precise way that may engine works, it is probably not well suited to a more typical game engine like Unreal which does not work based on equally sized in screen space triangular patches.

- Thin objects don’t occlude as well as a software rasterizer based implementation, this is because it relies on the object having volume

- Occluder fusion isn’t the best